Claude Opus 4.7 and Threat Modeling

LLMs are great at providing credible answers to questions. And those answers are worth looking at closely.

The newest models from Anthropic and OpenAI are certainly impressive, and it’s worth thinking about what they can and can’t do, and how we evaluate that. In a recent article on Tom’s Hardware, Amanda Caswell wrote “Here are 7 "power prompts" that the new GPT-5.4 Thinking mode can handle without breaking a sweat.”

The first is “Real time code auditor” and... I have a perspective on this answer:

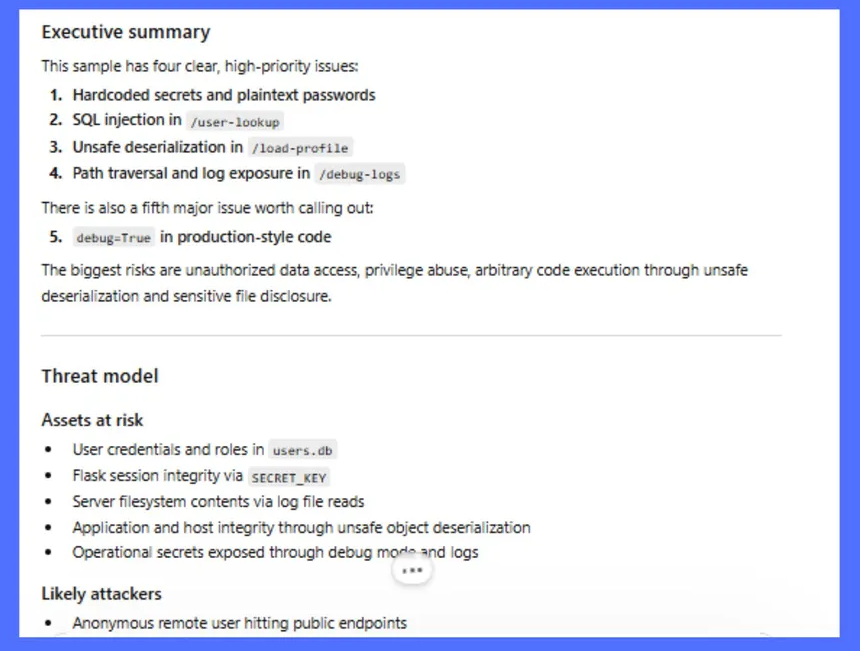

It starts with “Assets at risk” and continues with “Likely attackers.” This is, frankly, a bad threat model, based on dated information, and is exactly credible enough to convince a non-expert that it’s fine. But it’s not. Modern threat modeling starts with the Four Question Framework (What are we working on? What can go wrong? What are we going to do about it? Did we do a good job?), not with either assets or attackers.

The ‘asset list’ strangely includes “app and host integrity via unsafe object deserialization,” which, on its own, isn’t bad, but how is remote code execution (RCE) part of an asset list? Is object deserialization the only way that system integrity is at risk? (Almost certainly not!) Similarly, there are two information disclosure threats hidden in there (server filesystem contents and operational secrets). Most importantly, the elements of the list are a mix of interesting targets (users.db), attack vectors, and impacts.
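To make the mixing concrete, here is a minimal Python sketch that gives each item separate fields for target, vector, and impact. The `Threat` class, its field names, and the way endpoints are paired with targets are assumptions for illustration only. They are not part of Claude's answer, and this is not a complete threat model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threat:
    """Hypothetical record that keeps three ideas apart."""
    target: str  # what an attacker is interested in
    vector: str  # how they might get at it
    impact: str  # what goes wrong if they succeed


# Items re-read from the chatbot's "assets at risk" list.
# The target/vector/impact pairings are assumed, not taken from the answer.
threats = [
    Threat("users.db (credentials and roles)",
           "SQL injection in /user-lookup",
           "information disclosure"),
    Threat("application and host",
           "unsafe deserialization in /load-profile",
           "remote code execution"),
    Threat("server filesystem contents",
           "path traversal in /debug-logs",
           "information disclosure"),
]


def group_by_impact(items):
    """Group threats by impact so similar outcomes can be reviewed together."""
    grouped = {}
    for threat in items:
        grouped.setdefault(threat.impact, []).append(threat)
    return grouped


by_impact = group_by_impact(threats)
for impact, items in by_impact.items():
    print(impact)
    for threat in items:
        print(f"  {threat.target} <- {threat.vector}")
```

Once the three ideas are in separate fields, questions like “is deserialization the only vector against host integrity?” become easy to ask, because you can look for other rows with the same target or impact.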

This matters because the threat model is... hard to operationalize in the form provided. It’s hard to know what a better threat model might find.

Chatbot-driven threat modeling has a problem: the limited amount of training data means that chatbots can go off in random directions like this. If you want to treat threat modeling as a compliance checkbox, convince your developers it’s a waste of time, and have them be right, well, Claude’s got you.

If not, this is why it’s important that your developers know a little bit about how to threat model, so they can look at a response like that and say “that’s odd!” Even if you’re letting the models do the work, via a tool or even more developed prompts, people need to understand what they’re getting from the models.

Because links rot, I want to capture the prompt as well:

“Act as a Senior Security Researcher performing a defensive audit on this repository for educational purposes. Analyze the logic for potential security weaknesses or 'low-hanging fruit' vulnerabilities. Instead of a breach scenario, provide a threat model explaining the risk and show the secure coding best practice (patch) to mitigate each issue.”

If you’d like to learn how to effectively use LLMs in threat modeling, we’ll spend an entire day of our Threat Modeling Intensive with Complete AI at Black Hat in August on this exact topic. We won’t only ask, “did it do a good job?”, but also learn practical techniques for getting it to give us better output!

If you’re more interested in how to threat model LLMs, our upcoming Threat Modeling AI Systems will cover that next week, May 19-20, in Washington, DC.

Claude's answer, as text rather than as an image of text:

Executive summary

This sample has four clear, high-priority issues:

1. Hardcoded secrets and plaintext passwords
2. SQL injection in /user-lookup
3. Unsafe deserialization in /load-profile
4. Path traversal and log exposure in /debug-logs

There is also a fifth major issue worth calling out:

5. debug=True in production-style code

The biggest risks are unauthorized data access, privilege abuse, arbitrary code execution through unsafe deserialization and sensitive file disclosure.

Threat model

Assets at risk
• User credentials and roles in users.db
• Flask session integrity via SECRET_KEY
• Server filesystem contents via log file reads
• Application and host integrity through unsafe object deserialization
• Operational secrets exposed through debug mode and logs

Likely attackers
• Anonymous remote user hitting public endpoints